When the Insurers Become the Regulators: How AI Infrastructure Debt Is Rewriting Safety Rules

In January 2026, a quiet revolution began in the basement levels of global finance: insurance companies started pricing data center downtime like hurricane damage. Not because regulators told them to—but because the math of AI infrastructure left them no choice.

What followed wasn't oversight. It was underwriting.

And in the language of actuaries and bond prospectuses, a new form of systemic risk emerged—one where the safety standards for the AI boom are being written not in Washington or Brussels, but in the risk models of Lloyd's, Munich Re, and Swiss Re.

The Mismatch No Balance Sheet Can Hide

By late 2025, the numbers became impossible to ignore. Technology companies issued a record $108.7 billion in corporate bonds in just three months, roughly double the previous quarter, and issuance carried into 2026 with another $15.5 billion in the first two weeks alone. This wasn't funding for software licenses or cloud subscriptions. It was brick, mortar, steel, and silicon: the physical buildout of AI infrastructure at unprecedented scale.

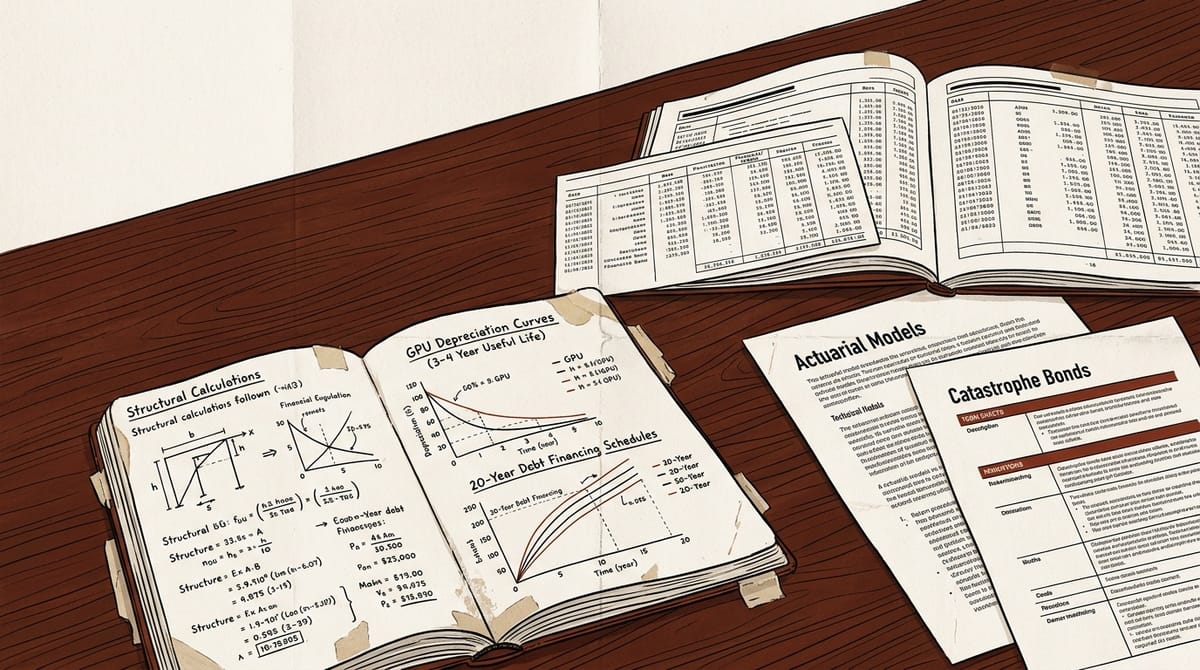

But here's what the prospectuses don't advertise: nearly two-thirds of that capital isn't going into buildings or land with 50-year horizons. It's going into graphics processing units (GPUs) and servers with useful lives measured in years, not decades, yet it is being financed with debt instruments structured like real estate mortgages.

When you finance assets that depreciate in 3-4 years with loans lasting 15-20 years, you don't get infrastructure. You get a maturity mismatch waiting to happen. The 2008 financial crisis also turned on a maturity mismatch, though the classic version ran the other way: borrowing short to lend long. Here the mismatch sits in the collateral, because the debt outlives the assets it paid for. It's happening in AI's backbone, where NVIDIA's H100 chips are already being displaced by H200s and GB200s while the debt used to buy them remains outstanding.
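To make the mismatch concrete, here is a minimal Python sketch comparing the book value of a GPU purchase under straight-line depreciation with the outstanding balance of a level-payment loan. Every input is a hypothetical assumption, not a figure from any prospectus: a $1 billion purchase fully financed by debt, a 4-year useful life, a 15-year term, a 7% annual rate, and annual payments. The helper names `loan_balance` and `asset_value` are illustrative.

```python
def loan_balance(principal: float, annual_rate: float, term_years: int, years_elapsed: int) -> float:
    """Outstanding balance of a fully amortizing loan with level annual payments."""
    if annual_rate == 0:
        return principal * (1 - years_elapsed / term_years)
    r = annual_rate
    # Level payment that retires the loan exactly at the end of the term.
    payment = principal * r / (1 - (1 + r) ** -term_years)
    growth = (1 + r) ** years_elapsed
    # Principal accrued at r, minus the accumulated value of payments made so far.
    return principal * growth - payment * (growth - 1) / r


def asset_value(cost: float, useful_life_years: float, years_elapsed: float) -> float:
    """Straight-line book value, floored at zero once the asset is fully depreciated."""
    return max(0.0, cost * (1 - years_elapsed / useful_life_years))


if __name__ == "__main__":
    cost = 1_000_000_000  # hypothetical GPU purchase, assumed 100% debt-financed
    for year in range(0, 16):
        debt = loan_balance(cost, 0.07, 15, year)
        value = asset_value(cost, 4, year)
        print(f"year {year:2d}: GPUs ${value / 1e6:7.1f}M  debt ${debt / 1e6:7.1f}M")
```

Under these assumptions the chips reach zero book value at year 4 while roughly 82% of the principal is still owed, leaving about eleven years of payments with no depreciating collateral behind them.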

The Insurance Gap That Became a Chasm

Enter the insurers. Traditional property and casualty policies were never designed for this. When AI first entered enterprise workflows, insurers responded not by creating new coverage but by adding AI exclusions to existing cyber, errors-and-omissions, and general liability policies. The logic was clean: these policies were priced for human error and mechanical failure, not for systems that can hallucinate regulatory filings at scale or execute flawed autonomous actions without human oversight.

That exclusion wave created a vacuum. Enterprises deploying AI found themselves suddenly uninsured for the very technology they were betting their strategic roadmaps on. Procurement stalled. Legal teams put guardrails on deployment. The gap between AI capability and deployable AI capability widened—not because the technology failed, but because the safety net vanished.

Into that void stepped affirmative coverage: insurance priced on actual AI risk rather than analogy, structured around requirements that forced companies to build safety infrastructure they would otherwise defer until regulators arrived.

Three products now define this architecture:

- Performance warranties (like Armilla's "Guaranteed") that trigger payouts if model KPIs drop below verified thresholds

- Vendor-side protection (expanded by Google Cloud with Beazley and Chubb) that uses insurance-backed indemnification as a competitive enterprise sales wedge

- Agent certification (the AIUC-1 standard from the Artificial Intelligence Underwriting Company) covering six pillars of AI agent safety with consortium backing from Anthropic, Google, and Meta
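The performance-warranty mechanism in the first item can be sketched as a threshold check over monitored KPI readings. This is a hypothetical illustration, not Armilla's actual contract terms: the `Warranty` fields, the averaging window, and the per-point payout schedule are all assumptions chosen to show the shape of the logic.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Warranty:
    """Hypothetical warranty terms; not any insurer's actual policy wording."""
    kpi_threshold: float     # verified baseline agreed at underwriting, e.g. 0.92 accuracy
    window: int              # consecutive evaluation periods averaged before a claim
    payout_per_point: float  # assumed payout per percentage point below the threshold
    max_payout: float        # policy limit


def warranty_claim(terms: Warranty, kpi_history: list[float]) -> float:
    """Payout owed for the most recent window, or 0.0 if the KPI held up."""
    if len(kpi_history) < terms.window:
        return 0.0  # too little monitoring data to evaluate a claim
    recent = mean(kpi_history[-terms.window:])
    shortfall = terms.kpi_threshold - recent
    if shortfall <= 0:
        return 0.0
    # Shortfall is a fraction; convert to percentage points before pricing it.
    return min(terms.max_payout, shortfall * 100 * terms.payout_per_point)


terms = Warranty(kpi_threshold=0.92, window=3, payout_per_point=50_000, max_payout=1_000_000)
print(warranty_claim(terms, [0.93, 0.90, 0.88, 0.89]))  # window mean ~0.89, ~3 points short
```

Averaging over a window keeps one noisy evaluation from triggering a payout, and the function pays nothing without enough history, which illustrates why an insurer needs continuous monitoring data before it can write this kind of cover at all.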

Here's what most analyses miss: these products aren't primarily about risk transfer. They're about using underwriting as a safety enforcement mechanism. An insurer can't price AI risk without audit trails, test suites, behavioral benchmarks, and deployment monitoring. That requirement forces companies to build the safety systems they would otherwise ignore.

Michael von Gablenz of Munich Re compares this to seat belts: insurance created the economic incentive, regulation formalized it later. Rajiv Dattani at AIUC sees parallels to Benjamin Franklin's Philadelphia Contributionship, which required fire-safe buildings for coverage and effectively created proto-building codes two centuries before municipal fire codes existed.

When the Market Sets the Standard

But AI infrastructure operates on a different timescale—and with different collateral—than traditional insurance models are built to handle.

Consider the data center itself. When insurers write builders' risk policies, they're used to valuing structures that appreciate or hold value over decades. In AI data centers, the real estate might be worth $500 million, but the GPUs inside it? Easily $35 billion. The specialization creates an aggregation nightmare: a single site housing thousands of companies' AI workloads means insurers aren't just covering one risk—they're layering thousands of identical exposures on top of each other, a concentration no traditional model was built to calculate.
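The aggregation problem is easiest to see by totaling policy limits per site. A minimal sketch, assuming a hypothetical book of downtime policies where each insured's exposure is tied to the data center hosting its workloads, and assuming the worst case that a site outage triggers every policy there at full limit. `Policy`, `accumulation_by_site`, and the figures in the usage are illustrative.

```python
from collections import defaultdict
from typing import NamedTuple


class Policy(NamedTuple):
    insured: str
    site: str     # data center hosting the insured's AI workloads
    limit: float  # maximum payout for a downtime claim


def accumulation_by_site(policies: list[Policy]) -> dict[str, float]:
    """Sum policy limits per site: the loss if that one site goes down."""
    totals: dict[str, float] = defaultdict(float)
    for p in policies:
        totals[p.site] += p.limit
    return dict(totals)


def worst_single_site_loss(policies: list[Policy]) -> float:
    """Largest accumulation at any one site (assumes every policy there pays in full)."""
    return max(accumulation_by_site(policies).values(), default=0.0)


# Hypothetical book: 1,000 insureds on one campus, 50 spread across separate sites.
book = [Policy(f"tenant-{i}", "campus-A", 2_000_000) for i in range(1000)]
book += [Policy(f"other-{i}", f"site-{i}", 2_000_000) for i in range(50)]
print(worst_single_site_loss(book))  # 2000000000.0, all from campus-A
```

Each policy looks small in isolation; the exposure only becomes visible when limits are grouped by the physical location that can fail.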

And then there's the secondary market problem. In 2008, when mortgages defaulted, there was at least a liquid market for houses. Prices fell, but recovery happened. For GPUs, there is no such market. If a lender has to liquidate $2 billion in AI chips in a default scenario, it will find almost no buyers. Recovery value collapses instantly, not because the chips are worthless, but because there's no mechanism to discover what they're worth in stress.

This isn't theoretical. It's showing up in lending terms. GPU-backed loans routinely run at 75% loan-to-value, meaning lenders are exposed if GPU values drop more than 25%. Given the three-to-four-year useful life of today's H100s versus the 15-to-20-year terms of the debt financing them, that buffer is vanishingly thin: straight-line depreciation over four years consumes 25% of an asset's value in the first year alone.
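The loan-to-value arithmetic can be written out directly. A minimal sketch, assuming an interest-only loan (so the balance stays flat) and straight-line depreciation; both are simplifying assumptions, and the function names are hypothetical.

```python
def current_ltv(initial_ltv: float, value_drop: float) -> float:
    """LTV after collateral loses `value_drop` (a fraction) of its value; the balance is unchanged."""
    return initial_ltv / (1.0 - value_drop)


def years_until_underwater(initial_ltv: float, useful_life_years: float) -> float:
    """Years of straight-line depreciation before collateral value falls to the loan balance."""
    # Value falls by 1/useful_life per year; the cushion is (1 - LTV) of the original value.
    return (1.0 - initial_ltv) * useful_life_years


print(current_ltv(0.75, 0.25))          # 1.0: loan equals collateral value
print(years_until_underwater(0.75, 4))  # 1.0 year on a 4-year useful life
print(years_until_underwater(0.75, 3))  # 0.75 years on a 3-year useful life
```

Amortizing principal would stretch these horizons somewhat, but on a 15-to-20-year schedule the early payments retire only a small share of principal, so the cushion still erodes far faster than the debt runs off.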

The Spreading Risk Nobody Wants to Name

The danger isn't just technical. It's financial—and it's contagious.

When pension funds and insurance companies buy asset-backed securities (ABS) and commercial mortgage-backed securities (CMBS) tied to AI infrastructure leases, they're doing so because these instruments match their long-duration liabilities. But what happens when the underlying asset—a GPU server rack—becomes obsolete before the lease term ends?

The distress doesn't stay isolated. It migrates up and down the capital stack. A default on one facility can trigger cross-defaults across interconnected obligations, impairing the ability to make lease payments to special purpose vehicles (SPVs), which then undermines the cash flows backing the ABS and CMBS products held by pensions, insurers, and endowments.
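The contagion path can be sketched in two steps: cross-default clauses decide which facilities stop paying, and a sequential-pay waterfall decides which tranches absorb the shortfall. This is a deliberately simplified, hypothetical model; real ABS and CMBS deals add reserve accounts, coverage triggers, and servicer advances, and the facility names, cross-default groups, and tranche sizes below are illustrative.

```python
def defaulted_facilities(initial: set[str], cross_default_groups: list[set[str]]) -> set[str]:
    """Propagate defaults: any group containing a defaulted facility defaults in full."""
    hit = set(initial)
    changed = True
    while changed:  # repeat until no group adds new defaults (handles chains of groups)
        changed = False
        for group in cross_default_groups:
            if group & hit and not group <= hit:
                hit |= group
                changed = True
    return hit


def waterfall(cash: float, tranches: list[tuple[str, float]]) -> dict[str, float]:
    """Pay tranches strictly in priority order; each gets min(amount due, cash left)."""
    paid: dict[str, float] = {}
    remaining = cash
    for name, due in tranches:
        amount = min(due, remaining)
        paid[name] = amount
        remaining -= amount
    return paid


lease_payments = {"facility-A": 40.0, "facility-B": 30.0, "facility-C": 30.0}
tranches = [("senior", 60.0), ("mezzanine", 25.0), ("equity", 15.0)]

down = defaulted_facilities({"facility-A"}, [{"facility-A", "facility-B"}])
cash = sum(amt for fac, amt in lease_payments.items() if fac not in down)
print(waterfall(cash, tranches))  # senior 30.0, mezzanine 0.0, equity 0.0
```

In this toy setup a single default on facility-A takes facility-B down with it through the cross-default clause, cutting SPV cash from 100 to 30, so even the senior tranche is impaired.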

Regulators are noticing. In January 2026, four U.S. Senators sent an open letter urging investigation of the sector's growing reliance on "complex and opaque debt markets" to borrow "staggering sums of cash," warning that an inability to service this debt "could cause destabilizing losses for an interconnected set of financial institutions, triggering a broader financial crisis that harms the economy."

The Federal Reserve and Bank for International Settlements have echoed these concerns, watching closely as AI-related debt issuance accelerates—not because they doubt the technology, but because they see the financial structure growing increasingly fragile beneath it.

The Profit Motive That Might Save Us

Here's the twist: the market might solve what regulation could not—not out of altruism, but because uninsurable risk doesn't scale, and unscaled risk doesn't generate premiums.

Insurers aren't building AI safety frameworks because they believe in safety as an abstract good. They're doing it because if AI systems can't be insured, they can't be deployed at the scale needed to justify the debt used to build them. And if they can't be deployed at scale, the premiums disappear.

This mercantile driver has historically proven more reliable than moral appeals. Fire insurance in London was born after the city burned in 1666, and it did more than pay claims: it gave Nicholas Barbon the economic incentive to fund the city's first fire brigades, because insuring a risk he couldn't mitigate was mathematically suicidal. The brigades that protected London weren't built by Parliament. They were built by underwriters who needed their bets to win.

Today, the same pattern repeats. When institutional capital starts pricing data center downtime like a hurricane, international regulatory and liability frameworks will inevitably follow—not because lawmakers had a sudden change of heart, but because the market had already built the architecture they're now being asked to ratify.

The Unasked Question About Tomorrow's Infrastructure

The emergence of AI infrastructure insurance isn't evidence that the problem is solved. It's evidence that the problem has been financialized—and financialized problems get solved only where profit exists from solving them.

The question isn't whether insurance will replace regulation. It's whether the safety infrastructure that underwriters demand—audit trails, behavioral benchmarks, real-time monitoring, deployment controls—will be robust enough to survive the moment when regulators finally arrive and decide the market's answer wasn't sufficient.

Building codes born from fire insurance protected structures for centuries. The question for AI is whether the codes being written in actuarial spreadsheets and bond covenants today will outlast the generation of models that prompted their creation—or whether, when the next technological shift arrives, we'll find ourselves once again standing in the ashes, wishing we'd built the fire brigades sooner.

---

*Sources: Moody's Analytics (Q4 2025 debt issuance), New Orleans City Business (Jan 2026), Finance-Commerce (Jan 2026), Quinn Emanuel Urquhart & Sullivan litigation risks memorandum (2025), A-Invest data center debt analysis (Dec 2025), Artemis.bm catastrophe bond reporting (Jan-Feb 2026), Tony Grayson AI infrastructure financing risks analysis (Jan 2026), Marketplace "too big to fail" coverage (Jan 2026), ITiger insurance capacity report (Nov 2025), International Association of Insurance Supervisors Global Insurance Market Report (Dec 2025), Insurance Journal insurance sector analysis (Feb 2026), Door3 AI in reinsurance catastrophe planning (Oct 2025), Racket News AI finance risks analysis (Dec 2025), Indium Technology catastrophic risk management whitepaper.*

See also: *The Underwriters Are Writing the Rules No Legislature Passed*, for how insurance is already shaping AI safety standards in related domains.