Coupang and the New Geography of AI Jurisdiction

The next AI sovereignty battle may not start with a model ban. It may start when a country decides the platform that knows its consumers best still has to answer locally for where that knowledge goes.

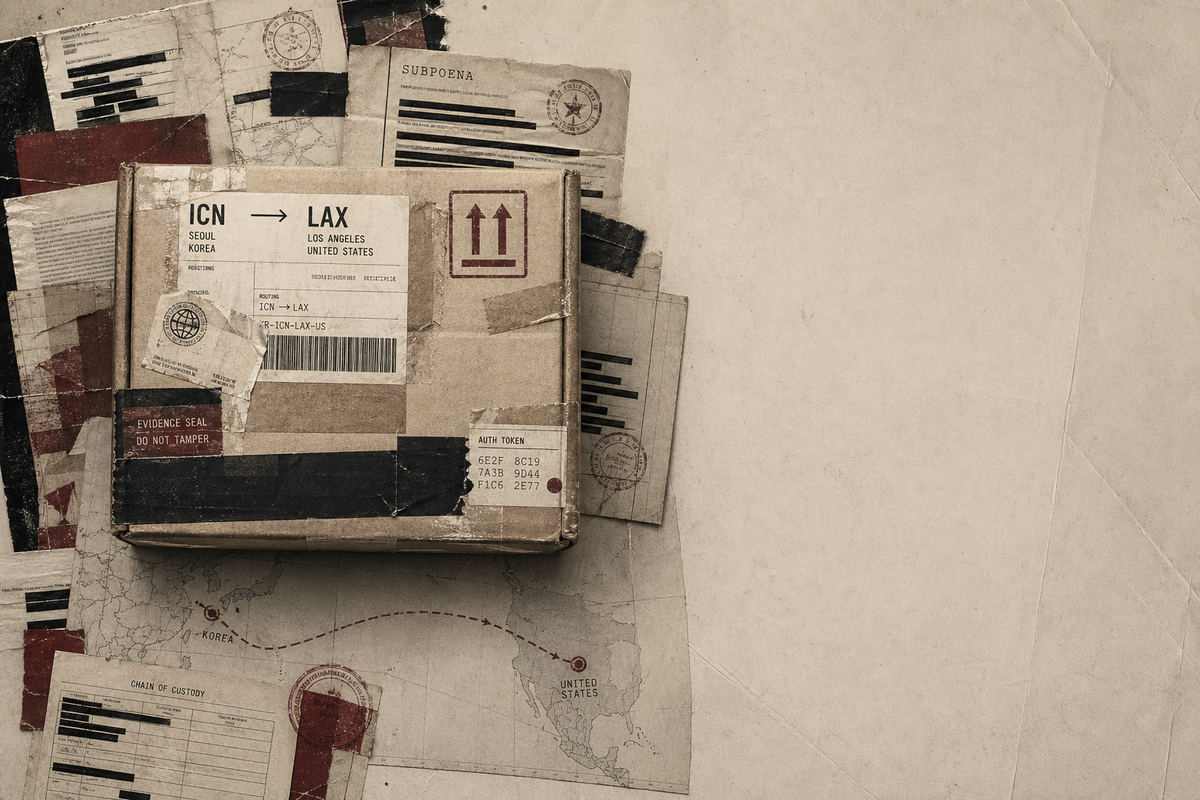

A former employee reportedly moved through Coupang's internal systems for months, pulling customer records tied to roughly 33.7 million accounts before regulators concluded the company had weak authentication controls and slow disclosure practices. That operational sequence matters more than the headline shock because it shows where strategic leverage accumulates: inside login flows, warehouse demand signals, delivery histories, and the records that bind them together. Seoul is treating the case as a local governance failure with national stakes. Washington is already signaling that disciplining a U.S.-listed platform can be reframed as discrimination rather than oversight.

Why this matters now

The current debate around AI sovereignty still overweights semiconductors, compute controls, and model access. Those matter, but they describe the visible hardware layer rather than the administrative terrain where states can actually impose pressure. In the Coupang case, the dispute is moving through breach findings, disclosure timing, and regulator authority, not through arguments about model weights. Rest of World captures the central tension: South Korean authorities see a platform operating on Korean behavioral data, while U.S. lawmakers see a company that deserves protection from what they characterize as unfair foreign treatment.

That shift matters because platforms like Coupang sit at the junction of retail demand, logistics routing, identity verification, payments, and consumer habits. Once those layers are integrated, a breach investigation becomes more than a privacy or cybersecurity matter. It becomes an argument over who has standing to inspect the substrate from which recommendation systems, pricing engines, fraud models, and later agentic tools will be built. That is the same larger pattern I described in AI Sovereignty Is Splintering Into Three Models: the contest is no longer only over building AI, but over deciding which institutions govern the inputs and permissions around it.

Why the breach framing is too small

The Reuters account, republished by The Star, is striking because South Korea did not describe the incident as an especially sophisticated intrusion. Regulators said the exposure ran from April to November and reflected management failure, poor access controls, and delayed reporting. That distinction matters. If a state concludes the problem is ordinary governance weakness inside a platform with extraordinary reach, then enforcement becomes a judgment about corporate discipline rather than bad luck.

Once that judgment is made, the political meaning of the case widens. A foreign-listed company can argue that it is being singled out, but host-country regulators can answer that they are not regulating nationality; they are regulating the custodianship of data generated inside their own market. That is why the breach frame is too small. The question is not simply whether customer data was mishandled. The harder question is whether a platform can become systemically embedded in a national economy while remaining partially insulated by the diplomatic and capital-market protections of another jurisdiction.

How operational data becomes an AI sovereignty asset

Operational data becomes strategically valuable long before anyone labels it AI infrastructure. A commerce platform observes return rates by district, item substitution patterns, delivery latency, household purchase timing, promotion sensitivity, customer support interactions, and the edge cases where automation fails. Those traces are not glamorous, but they are the material from which practical machine intelligence is tuned. They improve recommendation ranking, labor allocation, route optimization, dynamic pricing, demand forecasting, and automated exception handling. In other words, they turn a retailer into a continuously updated map of how a society consumes, waits, clicks, and responds.

That is why sovereignty disputes are migrating downward from model rhetoric into the operating systems of daily life. The state that can audit, localize, or threaten the business license of such a platform gains leverage over future AI development even if it never trains a frontier model itself. The adjacent debate in How AI Governance Is Splitting Between Innovation Theater and Democratic Accountability applies here: legitimacy increasingly comes from governing the environments where AI acts, not merely from praising innovation in the abstract. A country that loses control of those environments may discover too late that the most important AI asset was not compute, but continuous jurisdiction over behavioral exhaust.

Who gains leverage when platforms outrun jurisdiction

Platforms that span jurisdictions can play a deeper game than simple regulatory arbitrage. They can accumulate local market dependence while preserving access to a more powerful political backstop abroad. In this case, the House Judiciary Committee has already framed South Korea's posture as discrimination against an American company and a potential trade conflict. That move matters because it converts a national enforcement action into a bilateral issue, raising the cost of local discipline without requiring the company to win the factual case on security practice.

Coupang's reported U.S. lobbying push makes the structure even clearer. A platform does not only hold data; it can mobilize home-jurisdiction institutions to defend the legal and narrative terms under which that data is governed. That creates an asymmetry. Host states may have users, warehouses, workers, and records within reach, but firms with cross-border political access can raise the matter to trade law, investment climate, or strategic competition. The same permission dynamics show up in a different register in The Next AI Supply Chain Fight Is About Who Gets Trusted Fast: the side that secures trusted standing early often shapes the market before technical debates are even settled.

What this changes for cross-border AI

For operators and investors, the implication is that AI exposure should be evaluated through jurisdictional depth, not just technical capability. Which regulator can compel disclosure? Which country can halt data transfers, freeze expansion, or demand architectural changes? Which political system will treat a platform as ordinary commerce, and which will treat it as quasi-infrastructure? These questions used to sit in the compliance appendix. They are now upstream variables in how intelligent services will be trained, deployed, and defended.

For states, the lesson is less comfortable. Once a foreign-listed platform becomes the dominant collector of domestic behavioral data, sovereignty is no longer a matter of passing AI principles or industrial policy slogans. It becomes a contest over inspection rights, audit credibility, and the willingness to absorb retaliation when enforcement touches an asset another country has decided to politically protect. That is why the next serious AI jurisdiction fights may arrive through delivery networks, customer accounts, and breach reports rather than through model bans. The unresolved issue is whether democratic governments still have the institutional stamina to govern the platforms that know their populations best before those platforms become the default operating layer for automated decision-making itself.