The OECD-GPAI Merger: How Bureaucratic Architecture Is Reshaping Global AI Governance

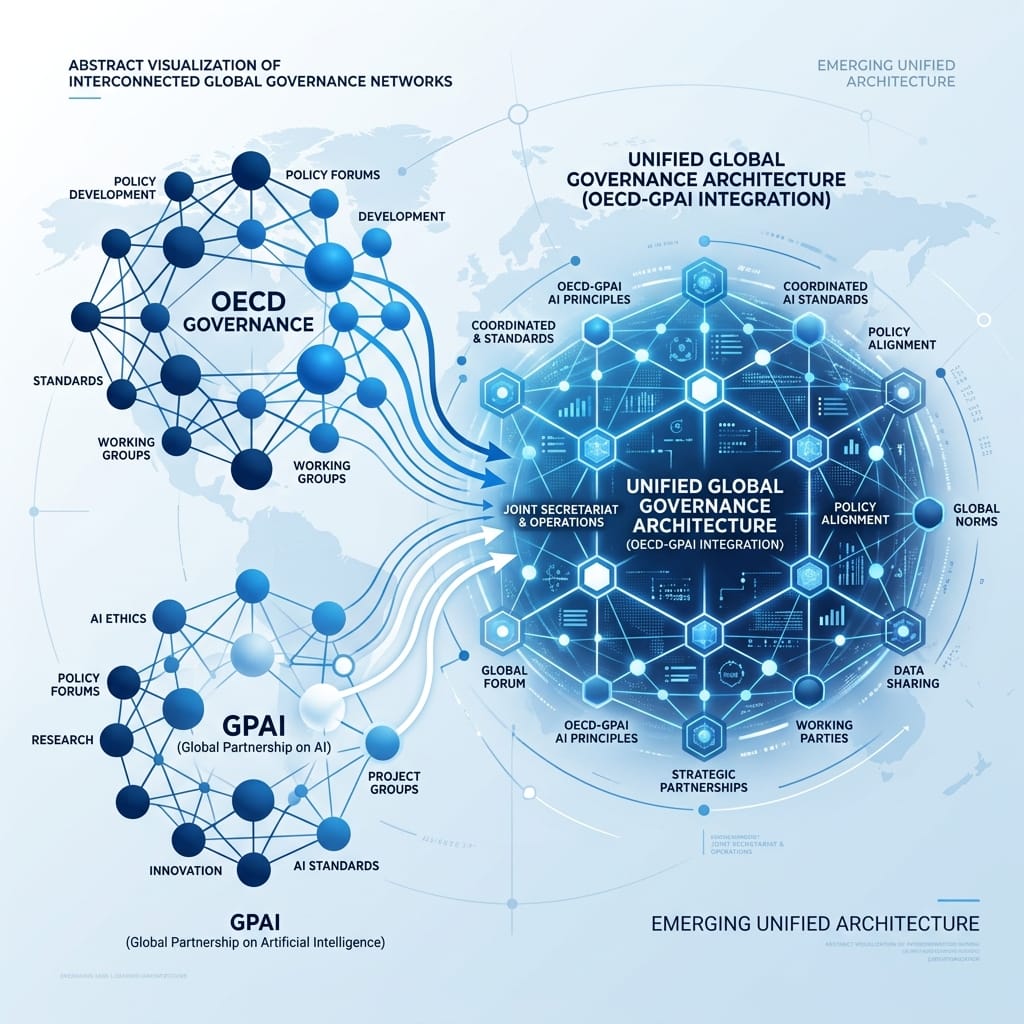

On July 3-4, 2024, the Global Partnership on Artificial Intelligence (GPAI) and the Organisation for Economic Co-operation and Development (OECD) announced an integrated partnership that quietly reshaped the architecture of global AI governance. This wasn't merely another memorandum of understanding or joint working group. It was a structural unification that merged two distinct institutional approaches under a single framework, creating what amounts to a new node in the global AI governance network.

The mainstream narrative frames this as a simple efficiency move—reducing duplication and streamlining efforts between organizations that were already closely cooperating. What most observers missed is that this merger represents a fundamental shift in how global AI governance is conceptualized and operationalized, moving from a network of overlapping initiatives to a more coherent architectural approach.

The traditional view of global AI governance presents it as a fragmented landscape: OECD's policy influence on one side, GPAI's multi-stakeholder approach on the other, with various UN initiatives, regional frameworks, and national strategies scattered in between. This fragmentation is often lamented as a weakness, with calls for greater coordination and harmonization.

What this perspective overlooks is that the fragmentation itself served a purpose. Different institutions brought different strengths to the table: OECD's deep policy expertise and consensus-building among advanced economies, GPAI's broader multi-stakeholder inclusion that brought in developing countries and civil society perspectives, and various regional frameworks that addressed local contexts and priorities.

The OECD-GPAI merger doesn't eliminate this diversity—it channels it through a new architectural framework. By bringing GPAI and OECD countries together on equal footing under the GPAI brand, while grounding the partnership in the OECD Recommendation on AI, the integrated partnership creates a structure that can simultaneously maintain policy coherence and expand inclusivity.

The key institutional innovation lies in the expert layer integration. The GPAI Multistakeholder Expert Group now joins the OECD Network of Experts on AI, creating a single expert community that pools contributions from both organizations. Meanwhile, the existing GPAI Expert Support Centres in Paris, Montreal, and Tokyo continue to operate, providing geographic distribution and specialized expertise.

This architectural approach addresses a critical tension in global governance: the need for both technical coherence and broad legitimacy. The OECD side brings rigorous policy development grounded in the OECD Recommendation on AI (first adopted in 2019, updated in 2024), while the GPAI side ensures the partnership maintains its multi-stakeholder character and global reach, now encompassing 44 countries across six continents.

The partnership's design intentionally creates bridges between policy development and research, and among countries that share similar approaches to AI opportunities and risks. By capitalizing on the extensive multi-disciplinary expertise of the combined AI expert community, it aims to reduce costs and duplication while expanding collective reach. As the Brookings Institution analysis notes, this structure draws stability from the OECD's mixed budget of stable dues and voluntary contributions, while retaining the flexibility to move quickly on emerging issues.

Most significantly, the integrated partnership aims to welcome new members—particularly developing and emerging economies committed to the OECD Recommendation on AI. This represents a deliberate effort to expand the governance framework beyond its traditional OECD core while maintaining ideological coherence around human-centric, safe, secure, and trustworthy AI. The New Delhi Declaration explicitly called on countries regardless of their current membership status to join this collaborative effort.

This approach builds on themes explored in our recent analysis of AI sovereignty models, where we examined how different institutional approaches to AI governance reflect competing visions of digital authority and infrastructure control. While that piece focused on the contrast between capital-heavy and constraint-driven models of sovereignty, the OECD-GPAI merger represents a third path: architectural integration that preserves institutional diversity while enabling coordinated action.

The merger creates what amounts to a "critical node" in the global AI governance network, as described by Brookings scholars. Unlike previous attempts at creating entirely new international bodies for AI governance—which often struggled with legitimacy and resource constraints—the OECD-GPAI approach builds on existing institutional strengths while addressing their individual limitations.

This architectural innovation becomes particularly relevant when considering the partnership's role in supporting the G7's Hiroshima Process, which establishes the first international framework and code of conduct for safe, secure, and trustworthy advanced AI systems. The integrated partnership expands this framework's reach through the "Friends of the Hiroshima Process," which now encompasses 53 countries, including Kenya, Nigeria, and the UAE.

The bureaucratic architecture of this merger reveals a deeper truth about global AI governance: effectiveness doesn't come from eliminating institutional diversity, but from creating frameworks that can productively channel different strengths toward common objectives. The OECD-GPAI partnership isn't trying to create a single global AI regulator—it's building a more coherent network that can leverage the comparative advantages of different institutional approaches.

This matters because AI governance challenges increasingly require both technical sophistication and political legitimacy. Safety standards need expert input to be technically sound, but they also need broad acceptance to be effective in practice. The OECD-GPAI architectural approach attempts to solve this by creating a structure where policy development and multi-stakeholder engagement aren't competing priorities, but integrated functions of a single governance framework.

As global AI governance continues to evolve, the OECD-GPAI merger offers a model for how international institutions can adapt to complex technological challenges—not by seeking perfect unity, but by designing architectures that allow diverse strengths to operate in coordinated fashion. The real innovation isn't in the policy positions being advanced, but in the institutional architecture that makes those positions possible to advance coherently at global scale.

The merger's significance extends beyond immediate policy coordination. By integrating GPAI's Working Party on AI Governance with the OECD's Committee on Digital Policy, the partnership creates a direct channel for expert insights to inform policy recommendations and contribute to OECD soft law development. This addresses a long-standing challenge in global governance: ensuring that research and expert knowledge effectively translate into actionable policy.

Furthermore, the partnership's expert community integration represents "multistakeholderism at its best," combining GPAI's approach with the formal stakeholder engagement mechanism the OECD has had in place since 2008. This creates a structure where stakeholders have direct access to discussions with governments, moving beyond consultation to meaningful participation in the governance process.

The timing of this merger is particularly notable given the increasing fragmentation of global AI governance efforts. As countries pursue divergent regulatory approaches—from the EU's AI Act to the US's sector-specific guidance and China's state-led model—the OECD-GPAI partnership offers a potential avenue for maintaining coherence amidst diversity. Rather than imposing uniformity, it creates a framework where different approaches can coexist while advancing shared principles of human-centric, trustworthy AI.

This institutional innovation suggests that the future of global AI governance may lie not in creating ever-more numerous specialized bodies, but in designing smarter architectures that allow existing institutions to work together more effectively. The OECD-GPAI merger represents a pragmatic approach to governance reform: building on what works while addressing known limitations through thoughtful structural integration.