Why Most AI Content Strategies Are Automating the Wrong Layer

Many teams are using AI to accelerate content velocity before redesigning the judgment layer. That trade optimizes for dashboard metrics while quietly degrading the institutional trust that actually moves audiences.

A good content operation now has a strange smell to it.

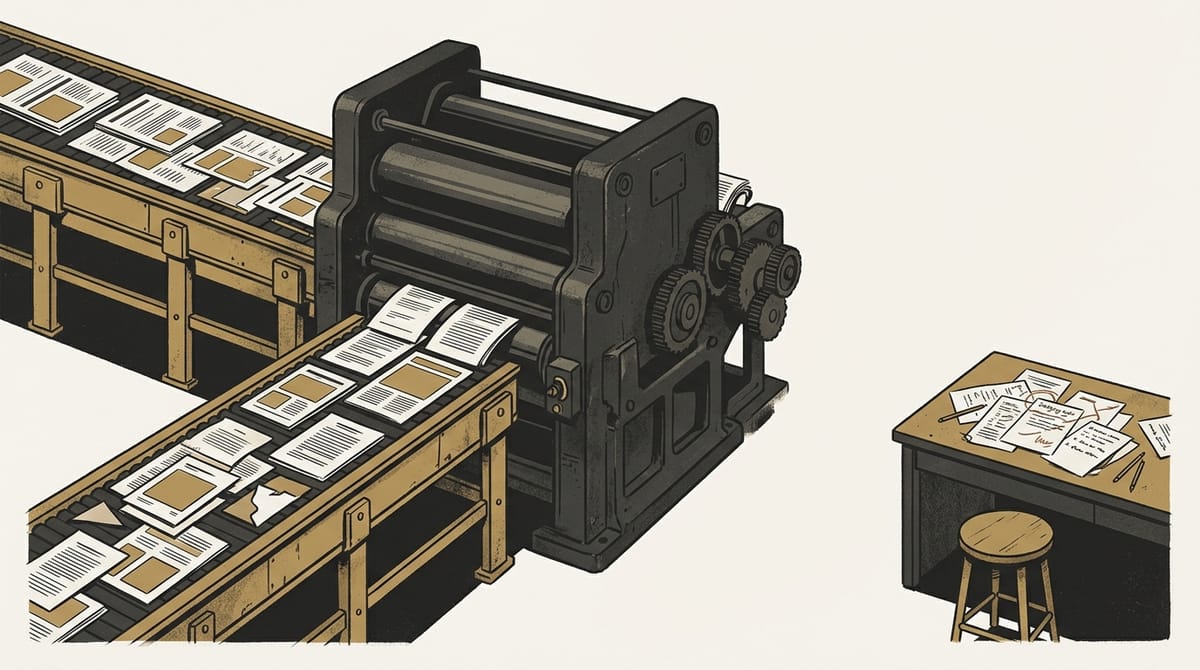

Not a literary smell. A workflow smell. You can feel it in the queue: more briefs, more drafts, more channel variants, more summaries, more polished assets moving through the pipe. The system looks healthier because the belt is moving faster. Then you read what comes out of it and realize the institution has automated velocity before it clarified judgment.

That is the mistake sitting underneath a large share of today’s AI content strategies.

Most teams are trying to make content production cheaper at the exact moment trust, interpretation, and editorial distinctiveness are becoming more expensive. That trade can look smart in a dashboard. It looks much worse in a market already filling with fluent, low-consequence copy.

The wrong scarcity assumption

Most AI content programs still start from the same premise: content is scarce, so the winning move is to make more of it more quickly.

That premise is already stale.

Drafting is cheaper. Formatting is cheaper. Repurposing is cheaper. Metadata generation is cheaper. Summary production is cheaper. In many categories, even competent surface-level structure is no longer hard to buy.

What remains scarce is not text. It is judgment.

Not judgment in the abstract. Judgment in the operational sense:

- which signal is real rather than merely fresh

- which audience concern is durable rather than fashionable

- which claim deserves prominence

- which source is solid enough to anchor the piece

- which angle is distinctive enough to earn attention

- which conclusion is too early, too soft, or too convenient

That is the layer many teams still have not mapped clearly inside their own workflow. They know where the drafts are produced. They do not know exactly where credibility is made.

What the better operators are actually automating

The useful lesson from publishers and media operators is often misread.

When outlets like Dow Jones, Reuters, and Business Insider talk about AI in the workflow, the headline can sound as if they are automating journalism itself. The more revealing detail is narrower and more operational. Digiday’s reporting highlights that publishers are often using AI for the plumbing around the editorial act: tagging, translation, metadata, classification, rough cuts, internal organization, and distribution support.

That distinction matters.

There is a large difference between using AI to strip friction out of the production belt and using AI to replace the layer where editorial consequence is actually decided.

One strategy protects attention. The other dilutes it. The first says: let the machine clear mechanical drag so humans can spend more time on framing, reporting, verification, and implication. The second says: let the machine generate the visible artifact, and let humans tidy the output afterward.

Those are not neighboring models. They produce different institutions.

Why the damage hides for a while

This is why weak AI content strategies can look successful before they become expensive.

The first-order metrics improve quickly. Cost per asset falls, turnaround times shrink, more channels get fed, publishing cadence becomes easier to maintain, and teams feel operational relief.

Then the second-order costs arrive.

Editors spend more energy sanding down generic copy than sharpening a real point. Writers learn, often without noticing, that assembly is being rewarded more than discovery. Teams begin to confuse consistency with distinctiveness. The content becomes cleaner at the sentence level while flatter at the thinking level.

The result is not always obvious embarrassment. More often it is drift.

The work becomes harder to remember. The voice becomes easier to imitate. The institution becomes more present and less trusted at the same time. That is the hidden tax of automating the wrong layer first. AI does not just reduce production cost. It can reduce the distance between a brand and its own genericness.

Why this is now a trust problem, not just a content problem

For years, polished output could borrow credibility from form. If the site looked serious, the cadence was steady, and the copy sounded competent, that was often enough to clear the bar.

That bar is moving.

In an environment saturated with synthetic fluency, polish no longer proves care the way it used to. Sometimes it signals the opposite: that a team has optimized the packaging more aggressively than the thinking.

This is why owned channels remain strategically important. beehiiv’s 2026 reporting on newsletter growth is not just a distribution story. It is a trust architecture story. When feeds become noisier and platform surfaces become less reliable, audiences place a higher premium on voices that seem to know what to ignore.

That applies beyond publishers. A founder writing to customers, a research firm briefing clients, a software company educating buyers, and a media brand defending its authority are all facing the same underlying shift. The advantage is moving toward operators who can produce a felt sense of discernment. Not endless output. Discernment.

The better sequence

The better AI content strategy is almost the reverse of the default one.

Automate what is mechanical before automating what is reputational.

That usually means pushing AI into:

- transcription

- tagging and categorization

- metadata generation

- translation passes that remain reviewable

- formatting and publishing mechanics

- first-pass research clustering

- excerpt and summary generation for internal use

- structural repackaging across channels

Then protect the layers that determine whether the work means anything:

- topic selection

- editorial framing

- source confidence

- argument shape

- audience calibration

- final voice

- conclusions and implications
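One way to make that split concrete is to write it down as a routing table that the workflow has to consult before any task is handed to a model. The sketch below is only an illustration: the task names, the `route` function, and the three owner labels are hypothetical, chosen to mirror the split described above rather than taken from any real tool. The one design choice worth noting is the default: a task nobody has classified is treated as reputational and stays with a human.

```python
from enum import Enum


class Layer(Enum):
    MECHANICAL = "mechanical"      # production plumbing, safe to automate first
    REPUTATIONAL = "reputational"  # where trust enters, keep a human owner


# Hypothetical task names mirroring the mechanical and reputational layers above.
TASK_LAYERS = {
    "transcription": Layer.MECHANICAL,
    "tagging": Layer.MECHANICAL,
    "metadata_generation": Layer.MECHANICAL,
    "translation": Layer.MECHANICAL,
    "formatting": Layer.MECHANICAL,
    "research_clustering": Layer.MECHANICAL,
    "internal_summary": Layer.MECHANICAL,
    "channel_repackaging": Layer.MECHANICAL,
    "topic_selection": Layer.REPUTATIONAL,
    "editorial_framing": Layer.REPUTATIONAL,
    "source_confidence": Layer.REPUTATIONAL,
    "argument_shape": Layer.REPUTATIONAL,
    "audience_calibration": Layer.REPUTATIONAL,
    "final_voice": Layer.REPUTATIONAL,
    "conclusions": Layer.REPUTATIONAL,
}

# Mechanical tasks whose output should still be checked by a person
# (assumption: only translation, which the text says must stay reviewable).
NEEDS_REVIEW = {"translation"}


def route(task: str) -> str:
    """Return the owner of a task: 'ai', 'ai_with_review', or 'human'."""
    # Unclassified tasks default to the reputational layer, so nothing
    # gets automated by accident.
    layer = TASK_LAYERS.get(task, Layer.REPUTATIONAL)
    if layer is Layer.REPUTATIONAL:
        return "human"
    return "ai_with_review" if task in NEEDS_REVIEW else "ai"
```

Under these assumptions, `route("tagging")` is owned by the model, `route("translation")` is automated but reviewed, and anything the team has not classified yet, such as a new task called `"pricing_commentary"`, falls to a human until someone deliberately decides otherwise.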

This is not a sentimental defense of human creativity. It is a practical map of where trust enters the system.

A smart team should not ask, “Where can AI write for us?” It should ask, “Where would automation quietly damage the thing our audience is actually relying on us to do?”

That answer changes by business model. A commodity SEO machine has one threshold. A publication, a founder-led brand, or a research-led operator has another. But the governing principle is stable: the more your business depends on interpretation, consequence, and judgment under uncertainty, the more dangerous it is to automate the judgment layer by accident.

The split now coming into view

A real strategic divide is forming.

Some organizations will use AI to become faster content factories. Others will use AI to become leaner judgment institutions.

The first group may look stronger in internal reporting for a while. They will ship more assets, reach more surfaces, and create the feeling of momentum. The second group will often look slower on the surface. But they will be better at something far more durable: saying fewer things that matter more.

That difference will shape more than editorial performance. It will shape brand durability, product trust, conversion quality, and long-run pricing power. It also echoes the pattern in Why MCP Became the Real AI Platform War: once the hidden operating layer becomes decisive, the visible artifact stops telling you where the real leverage sits. And as seen in Anthropic’s recent process leak, ignoring the operational governance of your core product eventually becomes a public risk.

Because the real product in a crowded information market is no longer just content. It is credible interpretation.

And that means the central workflow question has changed. The question is not how much content AI can help you produce. The question is whether your operating system still knows where judgment lives.