The AI Is Not Guessing What You Want. It Is Averaging What You Might Mean.

Most prompting advice treats language models like stubborn coworkers. The deeper issue is mathematical: ambiguity expands the possible answer space.

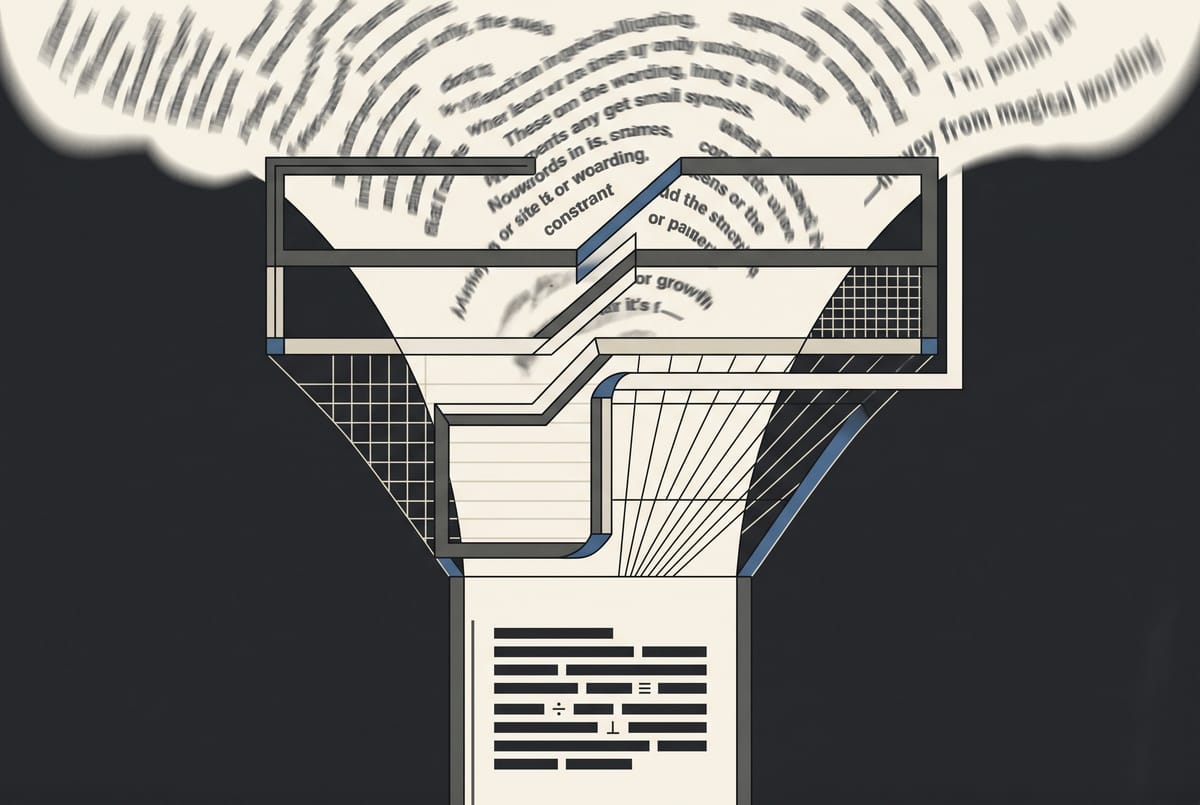

A vague prompt does not give an AI freedom. It gives it too many possible answers, so it returns the safest middle.

Generic Output Is Usually an Ambiguity Problem

Most people treat prompting as if it were a language trick. They look for the perfect phrase, the magic opening line, the hidden command that makes the model suddenly become useful.

That frame is wrong.

When an AI gives you a bland answer, it is usually not because your wording lacked flair. It is because your request left too much undecided. “Write a professional email.” “Give me ideas.” “Make this better.” Each of these prompts contains a task, but the task is under-specified. Professional for whom? Ideas for what constraint? Better by what standard?

The model fills in the missing pieces. When it does, it is not discovering your intent. It is choosing a likely continuation based on patterns learned in training. The result feels generic because the prompt invited the center.

The Model Is Completing a Pattern, Not Reading Your Mind

Large language models work by predicting tokens: small units of text that may be words, word fragments, punctuation, or symbols. Given the text so far, the model estimates what should come next.
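Here is a toy sketch of what "estimates what should come next" means. A model assigns a raw score (a logit) to each candidate token, and a softmax turns those scores into probabilities that sum to 1. The five-token vocabulary and the scores are invented for illustration. Real models score a vocabulary of tens of thousands of tokens or more, and many decoders sample from the distribution rather than always taking the top token.

```python
import math

def softmax(logits: dict[str, float]) -> dict[str, float]:
    """Turn raw scores (logits) into probabilities that sum to 1."""
    # Subtracting the max logit avoids overflow and does not change the result.
    m = max(logits.values())
    exps = {tok: math.exp(score - m) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

# Hypothetical scores for candidate next tokens after "Help me plan my".
# These numbers are made up; they do not come from any real model.
logits = {" week": 3.1, " day": 2.4, " trip": 1.2, " wedding": 0.3, " escape": -0.5}

probs = softmax(logits)
for tok, p in sorted(probs.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{tok!r}: {p:.3f}")

# Greedy decoding picks the single highest-probability token.
next_token = max(probs, key=probs.get)
print("greedy choice:", repr(next_token))
```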

The Transformer paper, “Attention Is All You Need” (2017), introduced the architecture behind modern language models, using attention to weigh the relationships between tokens in a sequence. The GPT-3 paper later showed that a large enough model could perform new tasks from instructions and a few examples placed in the prompt, without retraining.

Still, the basic mechanism matters. The model is not handed your intention as a private file. It receives text. From that text, it computes a probability distribution over possible continuations, one token at a time.

A vague prompt creates a wide field. “Help me plan my week” could mean a productivity plan, a wellness plan, a work calendar, a family schedule, or a motivational note. All are plausible. Under uncertainty, the model often moves toward the most common, least risky answer. That is why the output sounds like something everyone could use and no one would keep.
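One way to put a number on "a wide field" is Shannon entropy: the more evenly probability is spread across options, the higher the entropy. The sketch below is a conceptual illustration only. It assumes invented probabilities over the five readings of "Help me plan my week" listed above, plus a second invented distribution for a version of the request that states audience and scope. Neither set of numbers is measured from a model.

```python
import math

def entropy_bits(dist: dict[str, float]) -> float:
    """Shannon entropy in bits: higher means probability is spread over more options."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)

# Hypothetical probabilities over readings of "Help me plan my week".
# Illustrative guesses, not model measurements.
vague = {
    "productivity plan": 0.30,
    "work calendar": 0.25,
    "wellness plan": 0.20,
    "family schedule": 0.15,
    "motivational note": 0.10,
}

# Hypothetical probabilities for a constrained version, e.g.
# "Plan my work week around three client deadlines."
constrained = {
    "work calendar": 0.90,
    "productivity plan": 0.10,
}

for name, dist in [("vague", vague), ("constrained", constrained)]:
    top = max(dist, key=dist.get)
    print(f"{name}: entropy = {entropy_bits(dist):.2f} bits, "
          f"most likely = {top!r} ({dist[top]:.0%})")
```

In the vague case no reading clearly dominates, so whatever the model commits to is a low-confidence pick. In the constrained case most of the probability sits on one reading.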

Constraints Narrow the Possible Answer

A useful prompt reduces uncertainty.

Constraints do not make the AI less capable. They make the task more legible. They shrink the solution space so the model has fewer plausible paths and a stronger signal about which path matters.
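To picture "shrinking the solution space," treat a request as a set of dimensions the answer can vary along. The sketch below uses invented dimensions for a "professional email." Each stated constraint fixes one dimension, so the number of candidate shapes drops multiplicatively. This is an analogy for intuition, not a description of how a model represents a task internally, and the dimension names and values are hypothetical.

```python
from itertools import product

# Hypothetical dimensions along which a "professional email" could vary.
# The values are illustrative, not an exhaustive taxonomy.
dimensions = {
    "audience": ["client", "manager", "vendor", "recruiter", "colleague"],
    "goal": ["update", "request", "apology", "follow-up"],
    "length": ["short", "medium", "long"],
    "tone": ["warm", "neutral", "firm"],
}

def candidate_space(dims: dict[str, list[str]], constraints: dict[str, str]) -> list[dict[str, str]]:
    """Enumerate every combination of values consistent with the fixed constraints."""
    names = list(dims)
    # A constrained dimension keeps only the requested value; the others keep all values.
    pools = [[constraints[n]] if n in constraints else dims[n] for n in names]
    return [dict(zip(names, combo)) for combo in product(*pools)]

open_ended = candidate_space(dimensions, {})
specified = candidate_space(dimensions, {"audience": "client", "goal": "update", "tone": "firm"})

print("open-ended prompt:", len(open_ended), "plausible shapes")
print("constrained prompt:", len(specified), "plausible shapes")
for shape in specified:
    print(shape)
```

Leaving length open in the constrained call is deliberate. It shows that every unstated dimension is still a choice the model will make for you.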

OpenAI’s prompting guide emphasizes outcome, length, format, style, and context. Anthropic’s guide to clear instructions makes the same point: Claude performs better when given explicit context, success criteria, audience, and output requirements. OpenAI’s 2026 prompting fundamentals center on the same principle: define the task, the audience, and the intended use.

The deeper lesson is not “be more detailed.” Detail can be noise. The lesson is to constrain the dimensions that determine usefulness. That is also why many AI workflows are moving toward approval surfaces instead of open-ended chat boxes: the interface improves when possible actions are narrowed.

Four Constraints Do Most of the Work

A strong everyday prompt needs four things.

Objective: What should the AI accomplish?

Scope: What should it include, exclude, or prioritize?

Format: What shape should the answer take?

Audience: Who is the output for, and what do they already understand?

These four constraints work because they map to the main sources of ambiguity. Objective defines the job. Scope prevents drift. Format makes the answer usable. Audience calibrates language, assumptions, and depth.

Without them, the model must infer too much. With them, it can spend less effort deciding what kind of answer to give and more effort producing that answer well.
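If you assemble prompts in code, the four constraints can serve as a checklist that catches a blank field before anything is sent. This is a minimal sketch. `PromptSpec` and its field names are hypothetical labels, not part of any library. The example values reuse the founder summary prompt from the next section.

```python
from dataclasses import dataclass

@dataclass
class PromptSpec:
    """The four constraints from this section. All names here are illustrative."""
    objective: str
    scope: str
    output_format: str
    audience: str

    def missing(self) -> list[str]:
        """Return the names of any constraints left blank or whitespace-only."""
        return [name for name, value in vars(self).items() if not value.strip()]

    def render(self) -> str:
        """Join the constraints into one prompt, refusing to build an under-specified one."""
        gaps = self.missing()
        if gaps:
            raise ValueError(f"prompt is under-specified; missing: {', '.join(gaps)}")
        return " ".join([self.objective, self.scope, self.output_format, self.audience])

spec = PromptSpec(
    objective="Summarize this article in 180 words.",
    scope="Focus only on the central argument, the supporting evidence, and the practical implication.",
    output_format="Use three short paragraphs.",
    audience="The reader is a busy founder deciding whether to read the full piece.",
)
print(spec.render())
```

The order of the rendered sentences is a design choice, not a rule. What matters is that none of the four is left for the model to guess.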

The Difference Shows Up Immediately

Vague: “Write a summary of this article.”

Constrained: “Summarize this article in 180 words for a busy founder deciding whether to read the full piece. Focus only on the central argument, the supporting evidence, and the practical implication. Use three short paragraphs.”

The second prompt works because it defines use. The model is not summarizing for a student, editor, researcher, or casual reader. It is summarizing for a decision.

Vague: “Give me meal ideas.”

Constrained: “Give me five weeknight dinners for one person, each under 30 minutes, using mostly pantry ingredients. Avoid seafood and mushrooms. Format each idea as: dish name, why it works, and three core ingredients.”

This counters the model’s default tendency to produce broad lifestyle content. The answer has to fit time, quantity, ingredients, exclusions, and format.

Vague: “Make this email sound better.”

Constrained: “Rewrite this email so it sounds clear, calm, and direct. Keep it under 120 words. Do not add warmth that changes the meaning. The audience is a client who missed a deadline, and the goal is to reset expectations without sounding irritated.”

“Better” is not a standard. Clear, calm, direct, short, client-facing, and deadline-specific are standards.
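Some of these standards can also be checked. Length is the easiest case. The minimal sketch below tests a draft against the 120-word ceiling from the email prompt. The draft text is invented, and counting words by splitting on whitespace is an assumption, since other tools may count differently. Tone standards like "calm" and "direct" still need a human read; nothing here measures them.

```python
def word_count(text: str) -> int:
    """Count words by splitting on whitespace (a rough proxy)."""
    return len(text.split())

def check_length(text: str, max_words: int) -> tuple[bool, str]:
    """Check a measurable constraint such as 'Keep it under 120 words.'"""
    n = word_count(text)
    return n < max_words, f"{n} words (limit: under {max_words})"

# Hypothetical draft reply to a client who missed a deadline.
draft = (
    "Hi Sam, the report did not arrive on Friday as planned. "
    "Please send it by Wednesday so we can keep the review on schedule. "
    "If that date will not work, tell me today and we will adjust."
)
ok, detail = check_length(draft, max_words=120)
print(ok, detail)
```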

Vague: “Explain inflation.”

Constrained: “Explain inflation to a 16-year-old who understands supply and demand but has not studied economics. Use one concrete example involving groceries, avoid equations, and end with the difference between rising prices and falling purchasing power.”

The improved prompt defines the reader’s prior knowledge. That single constraint changes vocabulary, pacing, and examples.

The same mistake shows up in content work, where teams often automate production before defining the layer that actually needs judgment, as I argued in automating the wrong layer.

Prompting Is Not Politeness, Persona, or Secret Syntax

Most people misunderstand prompting because they focus on surface rituals. They add “please.” They assign a grand role. They ask the model to “think step by step” when the real problem is that the task itself is undefined.

Sometimes those moves help. Often they decorate ambiguity.

The model does not need ceremony. It needs operating conditions. If the output must be short, say short. If the answer should ignore one topic, say what to ignore. If the reader is a CFO, a parent, a beginner, or a skeptical customer, say that. If it must fit into a slide, email, table, checklist, or script, define the container.

The mistake is assuming the AI failed to understand language. More often, it understood too many possible versions of the language.

Give the AI a Box, Not a Wish

Before sending a prompt, ask four questions.

What result do I want? What boundaries matter? What should the answer look like? Who is this for?

Then write the prompt in that order.

“Draft a two-paragraph update for my manager. Focus on progress, blockers, and the decision I need by Friday. Keep the tone factual, not apologetic. Assume she knows the project but has not seen this week’s details.”

That is not fancy. It is constrained. The model can now infer less and produce more.

The best prompts do not flatter the machine or unlock hidden intelligence. They reduce uncertainty until the useful answer becomes the most likely answer.