The Security Paradox of AI Agents: Architecting for Trust

The real constraint on AI agent adoption is not whether agents can act. It is whether organizations can bound what happens when they act wrongly.

AI agents are being framed as the next productivity layer. That framing is incomplete. The harder story is that every gain in autonomous capability also widens the number of places where a small instruction failure can become a systems failure. The adoption bottleneck is not intelligence alone. It is whether organizations can trust an agent to operate across tools, permissions, and data without turning a minor compromise into an expensive chain reaction.

Why this matters now

The current wave of agent excitement assumes that better reasoning naturally produces safer deployment. In practice, deployment risk is compounding faster than trust architecture. Unit 42 has warned that agentic systems expand the practical surface for prompt injection, tool misuse, and unintended execution because the model is no longer just answering questions. It is interpreting goals, invoking tools, and acting inside real environments. That changes the security equation from bad output to bad operations. Unit 42 details the agentic attack surface here.

Trend Micro's Pandora work points in the same direction. The problem is not only that AI systems can be fooled. It is that once they are embedded in security or business workflows, manipulation can trigger privileged action. A fragile chatbot is embarrassing. A fragile agent with access is operationally dangerous. Trend Micro's Pandora red-team writeup shows that shift clearly.

What the mainstream framing misses

Most mainstream discussion treats agent security as an extension of model safety. That is too narrow. The real change is architectural. Agents collapse interpretation, orchestration, and action into one loop. Once a system can select tools, retrieve sensitive context, and execute tasks on behalf of a user, the relevant question is no longer whether the model produces a wrong sentence. It is whether the system can contain the consequences of a wrong decision.

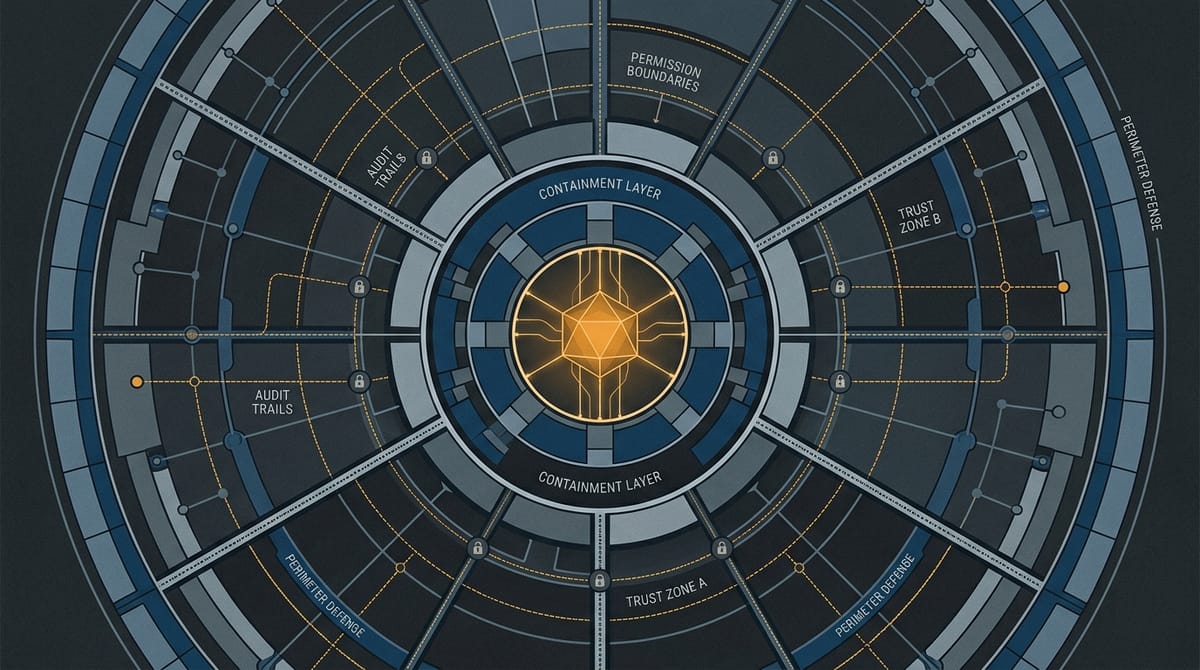

That is why this is less a frontier-model story than a control-design story. The organizations that deploy agents successfully will not just have smarter systems. They will have clearer permission boundaries, stronger audit trails, narrower execution scopes, and recovery paths when the model behaves in ways that are technically plausible but operationally unsafe.

This is the same pattern showing up elsewhere across AI. As I argued in Meta Didn't Kill Open Source — It Showed What Open Source Was For, the real battle is often not about the visible interface layer but about where control actually sits. Agent security is the trust version of that same split.

How the risk actually compounds

The dangerous feature of agents is not a single flaw. It is compositional fragility. A prompt injection lands in retrieved context. The model misinterprets it as instruction. A connected tool executes the wrong action. Excessive permissions widen the blast radius. Weak logging makes the failure hard to reconstruct. By the time a human notices, the system has converted an input-level compromise into a workflow-level failure.

McKinsey's framing of the agentic era gets closest to the real issue here: trust has to be engineered, not assumed. That means verifiable controls around what the system can do, where it can do it, and how failure is isolated when it happens. Their trust-through-engineering argument is here.

What matters is not whether attacks exist. They always will. What matters is whether the architecture turns compromise into a contained event or a distributed one. That is why the security problem of agents starts to look like governance by other means. The system is deciding, in code, which mistakes are survivable.

You can see the broader pattern in adjacent domains too. In The Next Academic Gatekeeper May Not Be a Journal Editor, the deeper shift was that governance moved from visible institution to invisible workflow design. Agent trust follows the same logic. The most important policy is often the policy embedded in the product.

What smart builders should optimize for

The builders most likely to win this wave will not be the ones who maximize agent autonomy fastest. They will be the ones who make autonomy legible, bounded, and auditable. That means least-privilege access, segmented tool rights, action verification at meaningful checkpoints, replayable logs, and architectures designed so that failure degrades locally instead of systemically.

For investors, this changes where defensibility may emerge. The opportunity is not just in another generic agent wrapper. It is in trust infrastructure: monitoring, policy enforcement, agent identity, permissioning, evaluation, and containment layers that make deployment politically and operationally acceptable. Trust is becoming a go-to-market accelerator, not just a compliance tax.

That is why The Next AI Supply Chain Fight Is About Who Gets Trusted Fast matters here. In a market full of capable systems, the scarce asset is increasingly credible assurance. The firms that can compress the trust gap will move faster than the firms that merely advertise higher capability.

Closing insight

The security paradox of AI agents is that the more useful they become, the less you can treat security as a layer you add later. Capability creates pressure to connect agents to the real machinery of an organization. The moment you do that, trust stops being a brand claim and becomes an architectural requirement.

The strategic question is not whether agents will be attacked. It is whether the systems around them are designed so that being attacked does not immediately become being owned.